GSA SER Link Lists

Unlocking the Potential of GSA SER Link Lists

What Are GSA SER Link Lists?

GSA Search Engine Ranker (GSA SER) is a widely used tool for building backlinks at scale, and much of its throughput depends on one component: GSA SER link lists. These are curated collections of URLs where the software can attempt to create links, including platforms like forums, blog comments, social networks, trackbacks, and article directories. Instead of scraping targets from search engines on the fly, users import pre-verified lists, which speeds up campaign setup and cuts the proxy load that search-engine scraping would otherwise require. Submissions themselves may still need proxies and captcha solving.

Why Quality Link Lists Matter

A common misconception is that any list of URLs will produce results. The reality is that GSA SER link lists vary enormously in quality. A well-maintained list can mean the difference between hundreds of live links per day and a verification log full of dead domains, failed registrations, and low-authority spam. High-quality lists are carefully pruned, with dead platforms removed and only sites that actually accept submissions retained. They also categorize targets by platform type, allowing for more nuanced tiered linking strategies.

Platform Diversity and Indexing Rates

One of the core advantages of using specialized GSA SER link lists is the ability to control platform diversity. A balanced list might contain contextual targets like wikis and article directories, mixed with lower-quality but high-success-rate platforms for tier-2 campaigns. The goal is not just to blast links anywhere, but to ensure search engines actually crawl and index the created backlinks. Lists that are rich in platforms with good crawl rates—often identified by their domain authority or age—provide far more value than a random collection of never-indexed guestbooks.

Sourcing and Verifying Your Lists

Users can obtain lists through several methods. Some build them from scratch by running GSA SER’s own scraping features over weeks, then exporting and cleaning the data. Others purchase monthly subscriptions from vendors who specialize in updating and verifying targets daily. Regardless of origin, a critical step is running the list through a duplicate remover and a footprint checker. Many shared lists repeat the same URLs, which wastes verification threads and lowers efficiency.
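As a rough illustration of the duplicate-removal step, the sketch below deduplicates a list of URLs outside the tool. It is a minimal example, not GSA SER's own duplicate remover: the function names `normalize` and `dedupe` are hypothetical, and the normalization rules (ignore scheme, a leading `www.`, a trailing slash, and the fragment) are assumptions you may want to loosen or tighten. It also assumes every URL carries a scheme such as `http://`.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Build a comparison key: lowercase host without 'www.', path without a
    trailing slash, plus the query string. Scheme and fragment are ignored."""
    parts = urlsplit(url.strip())
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    key = host + parts.path.rstrip("/")
    if parts.query:
        key += "?" + parts.query
    return key


def dedupe(urls):
    """Keep the first occurrence of each normalized URL, preserving order."""
    seen = set()
    unique = []
    for url in urls:
        url = url.strip()
        if not url:
            continue  # skip blank lines
        key = normalize(url)
        if key not in seen:
            seen.add(key)
            unique.append(url)
    return unique


if __name__ == "__main__":
    sample = [
        "http://Example.com/forum/",
        "https://www.example.com/forum",  # same target as the line above
        "http://example.com/blog?p=1",
    ]
    for url in dedupe(sample):
        print(url)
```

Keeping the first occurrence and preserving order means the cleaned file can be compared line by line against the original export.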

Custom Footprints for Niche Targets

Advanced users often modify commercial GSA SER link lists by adding custom footprints. For example, if you are targeting a specific language or niche, you can filter the list to keep only domains that match certain keywords or TLDs. This transforms a generic link list into a laser-focused asset that builds contextual relevance signals. The combination of a solid base list and strategic footprint filtering is a favorite tactic among SEOs who want partial automation without losing all semblance of topical authority.
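The keyword and TLD filtering described above can be sketched in a few lines. This is an illustrative example under stated assumptions, not a GSA SER feature: `matches` and `filter_targets` are hypothetical names, keywords are matched as plain substrings anywhere in the URL, and TLDs are matched against the end of the hostname.

```python
from urllib.parse import urlsplit


def matches(url, tlds=(), keywords=()):
    """Return True if the URL passes both the TLD and the keyword filter.
    An empty filter is treated as 'no restriction'."""
    host = (urlsplit(url).hostname or "").lower()
    if tlds and not any(host.endswith("." + t.lstrip(".").lower()) for t in tlds):
        return False
    if keywords:
        haystack = url.lower()
        if not any(k.lower() in haystack for k in keywords):
            return False
    return True


def filter_targets(urls, tlds=(), keywords=()):
    """Keep only the URLs that satisfy the given filters, in original order."""
    return [u for u in urls if matches(u, tlds, keywords)]


if __name__ == "__main__":
    sample = [
        "https://garten-forum.de/thread/1",
        "https://example.com/garten",
        "https://blog.example.de/auto",
    ]
    # Keep German-TLD targets that mention the (hypothetical) niche keyword.
    print(filter_targets(sample, tlds=["de"], keywords=["garten"]))
```

Substring matching is deliberately crude; a stricter version might match keywords only in the hostname or use word boundaries.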

Integrating Lists into Your Campaign Workflow

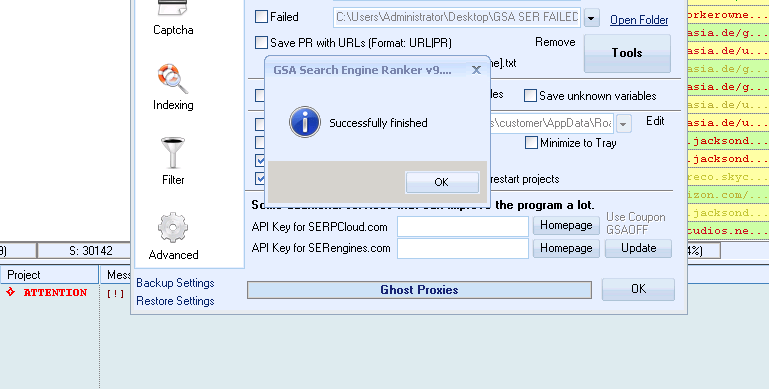

Once you have a reliable list, it must be configured correctly within the software. GSA SER allows you to assign specific lists to different projects or tiers. For a tier-1 money site campaign, you might use a smaller, extremely high-quality list with a low number of submissions per day. For tier-3 link juice amplification, you could load a massive list of thousands of auto-approve targets. The software’s multi-tier scheduler makes it possible to rotate between several GSA SER link lists seamlessly, preventing footprint patterns that could trigger algorithmic penalties.

Managing Verification and Retry Strategies

A list is only as good as its verification rate. The best lists include fields for success rates, last-verified dates, and registration modes. Within GSA SER, you can set global limits to stop using a list if the verification rate drops below a threshold, automatically switching to a backup list. This ensures that your campaigns never waste hours trying to post to dead forums. Regular maintenance is still required; even the most premium GSA SER link lists need periodic re-verification as websites go offline, change their scripts, or implement stronger captcha protections.
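The threshold-and-fallback idea can be expressed as simple bookkeeping. The sketch below is an external illustration only and does not use any GSA SER setting or API: `ListStats`, `pick_list`, and the default values for `min_rate` and `min_sample` are all hypothetical, and the counts would have to come from your own logs.

```python
from dataclasses import dataclass


@dataclass
class ListStats:
    name: str
    submitted: int  # submissions attempted with this list
    verified: int   # submissions later confirmed as live links

    @property
    def verification_rate(self) -> float:
        """Share of submissions that were verified (0.0 if nothing submitted)."""
        return self.verified / self.submitted if self.submitted else 0.0


def pick_list(candidates, min_rate=0.05, min_sample=200):
    """Return the first list, in priority order, that is still usable.

    A list is usable if it has too few submissions to judge yet, or if its
    verification rate is at or above min_rate. Returns None if none qualify.
    """
    for stats in candidates:
        if stats.submitted < min_sample or stats.verification_rate >= min_rate:
            return stats
    return None


if __name__ == "__main__":
    primary = ListStats("primary", submitted=1000, verified=20)  # 2% rate
    backup = ListStats("backup", submitted=500, verified=60)     # 12% rate
    chosen = pick_list([primary, backup])
    print(chosen.name if chosen else "no usable list")
```

The `min_sample` guard keeps a freshly imported list from being discarded before it has enough submissions for its rate to mean anything.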

Avoiding Common Pitfalls

Relying solely on public, widely shared link lists is a fast track to creating a massive footprint that search engines can easily devalue. Blended approaches work best: combine a purchased base list with your own scraped targets and random search engine queries during runtime. This adds enough variation to mimic natural link acquisition patterns. Another pitfall is neglecting content preparation. Even with perfect GSA SER link lists, nonsensical spun comments will result in deletions and low-value links. Invest time in high-quality article bodies, spun at the sentence level, and contextually relevant anchor text sets.

The Future of Automated Link Lists

As search engine algorithms evolve, the nature of link building changes. The era of dumping thousands of exact-match anchor text links on any available URL is over. Modern GSA SER link lists are trending toward tighter integration with AI content generation and smart submission rules that mimic human behavior. Lists are being enhanced with metadata about allowed anchor types, min/max content lengths, and even semantic topics. Those who treat their link lists as living, evolving databases—not static files—will continue to extract genuine value from automated platforms without falling into over-optimization traps.